The year 2026 is shaping up to be a turning point in the history of quantum computing. After years of incremental progress, several leading technology companies, including Microsoft, IBM, and Google, have announced hardware and software milestones that together signal the beginning of the fault-tolerant quantum computing era.

Microsoft's announcement of its topological qubit architecture, built on exotic Majorana-based hardware, has drawn particular attention. Unlike conventional superconducting qubits, which are highly susceptible to environmental noise, topological qubits are theoretically protected against certain types of errors by their very physical structure — a property that could dramatically reduce the overhead required for quantum error correction.

Microsoft's Topological Breakthrough

Microsoft's Azure Quantum team published results demonstrating that their topological qubits can be manufactured reproducibly and exhibit the characteristic signatures of Majorana zero modes — the quantum states at the heart of the topological approach. This represents a significant step beyond the 2023 controversy over earlier Majorana claims, with the new results subject to rigorous independent verification protocols.

"We are entering a phase where quantum computers are not just research instruments — they are becoming engineering platforms capable of solving problems that matter to industry and science."

IBM and Google Push Qubit Counts and Quality

IBM's roadmap continues to advance on schedule, with its latest processor demonstrating improved quantum volume and two-qubit gate fidelities exceeding 99.5% on select qubit pairs. The company's focus has shifted from raw qubit count to quality-adjusted performance, a metric that better reflects real-world computational utility.

Google's Willow chip, announced in late 2024, has continued to demonstrate below-threshold error correction: as more physical qubits are added to an error-correcting code, the logical error rate falls rather than rises. This is the key property required for scalable fault-tolerant quantum computation, and its confirmation in a real device marks a historic milestone.
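The below-threshold idea can be illustrated with a deliberately simple classical toy model, which is not the code run on Willow: a repetition code that stores one bit in d copies and decodes by majority vote. The Python sketch below assumes independent bit flips with probability p per copy. The function name `logical_error_rate` and the sample values of p are illustrative choices, and the 50% threshold is a property of this toy model rather than of any real device.

```python
from math import comb


def logical_error_rate(p: float, d: int) -> float:
    """Probability that majority vote over d noisy copies decodes the wrong bit.

    Assumes each copy flips independently with probability p (toy model).
    """
    if d % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    min_flips = d // 2 + 1  # the vote fails once more than half the copies flip
    return sum(
        comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(min_flips, d + 1)
    )


if __name__ == "__main__":
    for p in (0.1, 0.6):  # one value below and one above the toy threshold of 0.5
        rates = [logical_error_rate(p, d) for d in (3, 5, 7)]
        print(f"p={p}: " + ", ".join(f"d={d}: {r:.4f}" for d, r in zip((3, 5, 7), rates)))
```

With p = 0.1 the failure probability shrinks as copies are added, while with p = 0.6 it grows. That sign change is the qualitative behaviour the term "threshold" refers to.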

What Fault Tolerance Means in Practice

Fault-tolerant quantum computing refers to the ability to perform arbitrarily long quantum computations despite the presence of physical errors in the hardware. It requires encoding logical qubits — the information-carrying units — across many physical qubits, with continuous error detection and correction running in parallel with the computation.
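As a minimal illustration of encoding followed by error detection and correction, the Python sketch below uses the classical three-bit repetition code, a common teaching stand-in for the quantum bit-flip code. It is a toy under strong assumptions: real quantum codes extract parities through ancilla qubits without reading the data directly, and they must also handle phase errors. The helper names (`encode`, `syndrome`, `correct`) are hypothetical.

```python
def encode(bit):
    """Spread one logical bit across three physical bits."""
    return [bit, bit, bit]


def syndrome(block):
    """Parity checks on neighbouring pairs; they reveal an error's location
    without depending on the encoded value itself."""
    return (block[0] ^ block[1], block[1] ^ block[2])


# Map each syndrome to the position that should be flipped back
# (None means no error was detected).
_FIX = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(block):
    """Return a copy of the block with any single bit flip undone."""
    fixed = list(block)
    position = _FIX[syndrome(block)]
    if position is not None:
        fixed[position] ^= 1
    return fixed


if __name__ == "__main__":
    for position in range(3):
        noisy = encode(1)
        noisy[position] ^= 1  # inject a single bit-flip error
        print(noisy, "syndrome", syndrome(noisy), "corrected", correct(noisy))
```

The sketch corrects any single flip but is fooled by two simultaneous flips, which is why larger codes are needed as computations get longer.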

The overhead for this error correction has historically been enormous, requiring thousands of physical qubits per logical qubit. Recent advances in quantum low-density parity-check (LDPC) codes, together with hardware improvements, have begun to reduce this overhead significantly, bringing practical fault-tolerant computation closer to reality.
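To give a feel for where the overhead comes from, the Python sketch below combines two textbook ingredients under stated assumptions. First, a rotated surface code of distance d uses d^2 data qubits plus d^2 - 1 measurement qubits, so 2d^2 - 1 physical qubits per logical qubit. Second, it assumes the logical error rate follows the idealized form A / Λ^((d+1)/2). The prefactor A = 0.1, the suppression factors Λ, and the target of 1e-12 are placeholder values chosen for illustration, not measurements from any device.

```python
def physical_qubits(distance: int) -> int:
    """Physical qubits in one rotated surface-code patch: d^2 data + (d^2 - 1) measure."""
    return 2 * distance * distance - 1


def required_distance(suppression: float, target: float = 1e-12, prefactor: float = 0.1) -> int:
    """Smallest odd distance whose modelled logical error rate meets the target.

    Model (an assumption): error(d) = prefactor / suppression ** ((d + 1) / 2).
    """
    if suppression <= 1.0:
        raise ValueError("the model only improves with distance when suppression > 1")
    distance = 3
    while prefactor * suppression ** (-(distance + 1) / 2) > target:
        distance += 2  # surface-code distances are conventionally odd
    return distance


if __name__ == "__main__":
    for suppression in (2.0, 4.0):  # hypothetical suppression factors
        d = required_distance(suppression)
        print(f"suppression={suppression}: distance {d}, {physical_qubits(d)} physical qubits")
```

Under these assumptions, raising the suppression factor from 2 to 4 cuts the required distance from 73 to 37 and the physical-qubit count per logical qubit from 10,657 to 2,737. Better hardware therefore reduces overhead even before a more efficient code family is adopted.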

Implications for Enterprise and Research

For enterprises currently evaluating quantum computing strategies, the developments of early 2026 represent a clear signal: the timeline for quantum advantage in commercially relevant problems has shortened materially. Industries including pharmaceuticals, logistics, financial modelling, and materials science are accelerating their quantum readiness programmes in response.

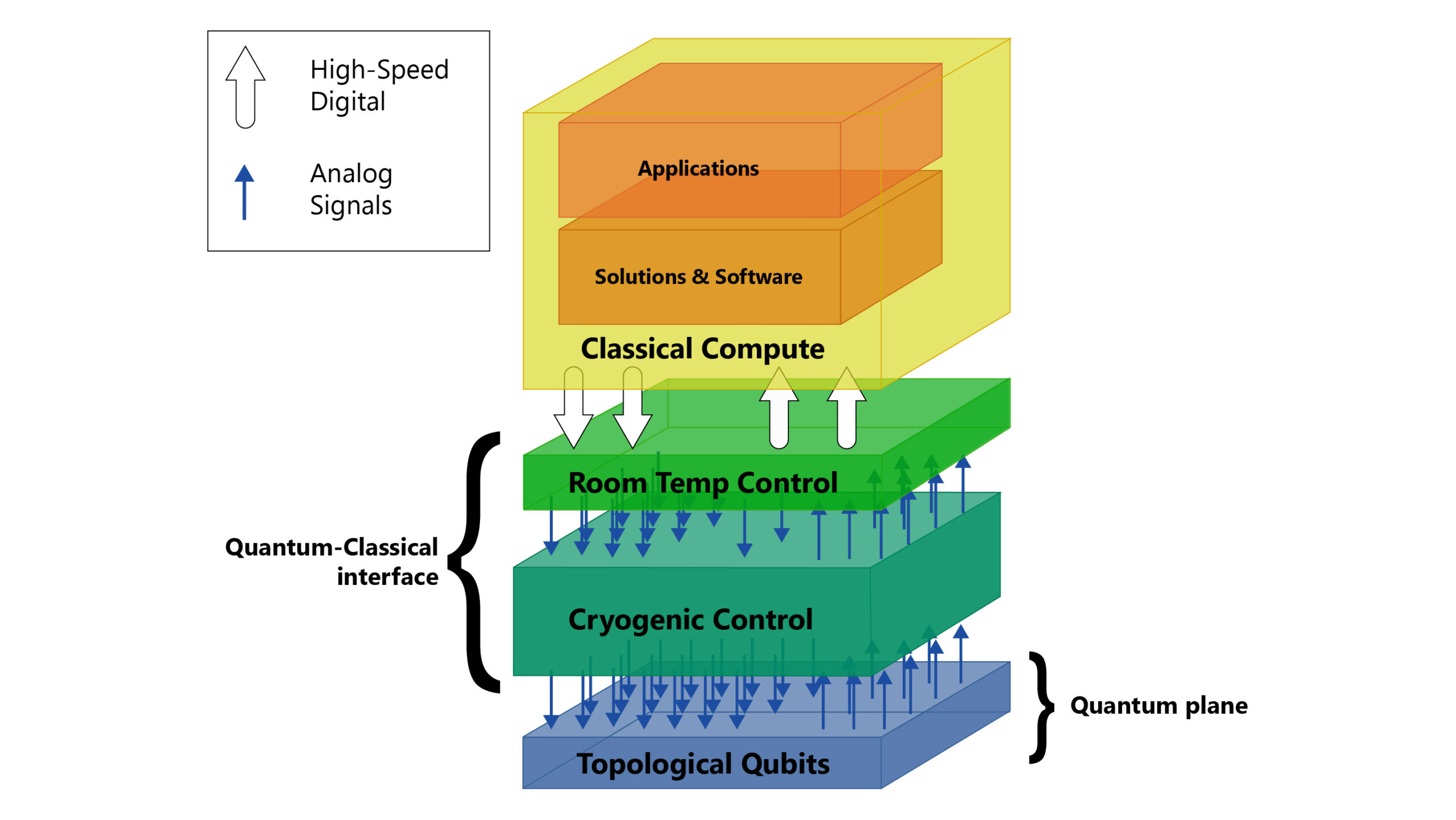

Research institutions, meanwhile, are increasingly integrating quantum processors into hybrid classical-quantum workflows, using quantum hardware for the portions of computation where it offers a genuine advantage while relying on classical systems for the remainder.